Before starting your NLP journey, it is useful to meditate on two questions:

1. Is a unique NLP component critical for the core business of our company?

As an example, imagine you are an online bank. You want to optimise your customer service by classifying incoming customer requests with NLP. This enhancement can help you save costs but it will not be part of your core business activities. By contrast, a business in targeted advertising should make sure it does not fall behind on NLP — this could significantly weaken its competitive position.

2. Do we have the internal competence to be innovative in our NLP stack?

Another example: you hired and successfully integrated a PhD in Computational Linguistics and can grant her the freedom to design new solutions for your business issues — she will likely be motivated to enrich the intellectual property portfolio of your company. On the other hand, if you are hiring middle-level data scientists without a laser focus on language that need to split their time between data science and engineering tasks, don't expect a unique IP contribution. Your team will rather fall back on ready-made algorithms due to lack of time and mastery of the underlying details.

Now, two hints:

Hint 1: if your answers are "yes" and "no" — you are in corporate trouble! You'd better go one step back and identify technological differentiators that do match your core competence.

Hint 2: if your answers are "yes" and "yes" — stop reading and get to work. Your NLP roadmap should already be defined by your specialists to achieve the business- specific objectives.

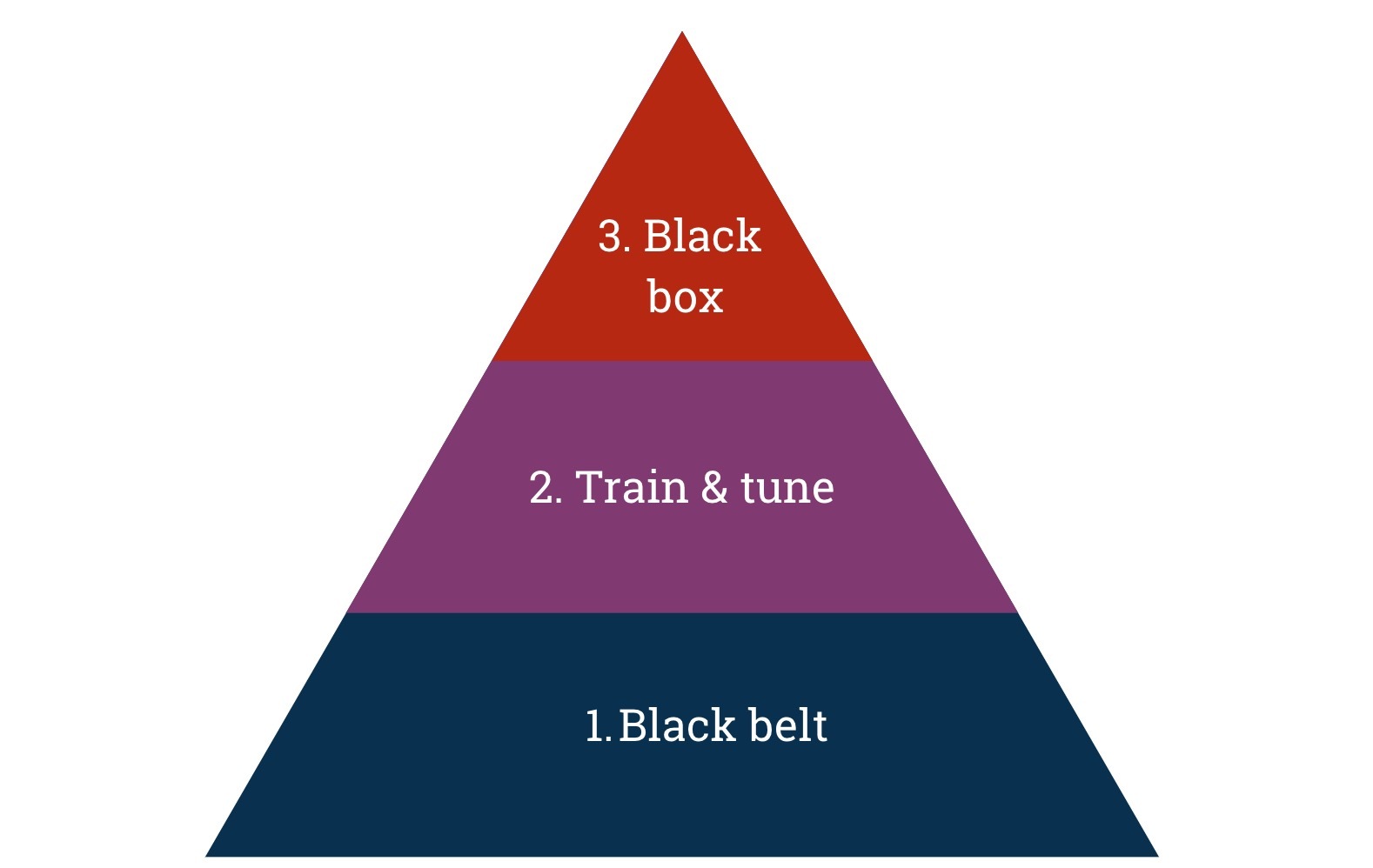

If you are still there, don't worry - the rest will soon fall in place. There are three levels at which you can "do NLP":

- Black belt level, reaching deep into mathematical and linguistic subtleties

- Training&tuning level, mostly plugging in existing NLP/ML libraries

- Black box level, relying on "buying" and integrating third-party NLP

Let's elaborate: the first, fundamental level is our "black belt" — it comes close to computational linguistics, the academic counterpart of NLP. The crowd here can be split into two camps — the mathematicians and the linguists. The camps might well befriend each other, but the mindsets and the way of doing things will still differ. The math guys are not afraid of things like matrix calculus and will strive on details of newest methods of optimisation and evaluation. At the peril of leaving out linguistic details, they will go for a myriad of technical tricks to improve the recall of your algorithms. The linguists were raised either on highly complex syntactic formalisms or alternative frameworks such as cognitive grammar, which give more room to imagination but also allow for formal vagueness. They will gravitate towards writing syntactic and semantic rules and compiling lexica, often needing their own sandbox and focusing on the precision part. Depending on how you handle communication and integration between the two camps, their collaboration can either block productivity or open up exciting opportunities.

In general, if you can inject a dose of pragmatism into the academic perfectionism of these folks and make them buy into the idea of serving real-life customers for whom NLP is but an intimidating buzzword, you can potentially create unique competitive advantage. But be aware that you have to sell them on an authentic vision — and, most importantly, follow through. Engaging in hard fundamental work without seeing its fruits for the business would be a demotivating experience for your talented team.

The second level involves training and tuning of models using existing algorithms. In practice, your team will spend most of their time on data preparation, training data creation and feature engineering. Once this is done, the actual AI part — training and tuning — can be relatively smooth. This is the level of many future-oriented, larger organizations that grant budget for in-house AI projects. Your people will be data scientists pushing the boundaries of open-source packages for NLP and/or machine learning, such as scikit-learn, spacy, pytorch and tensorflow. They will invent new and not always academically orthodox ways of extending training data, engineering features and surface-side tweaking. The goal is to train well-understood algorithms such as information extraction, categorisation and sentiment analysis, customized to the specific data at your company.

The good thing here is that there are plenty of great open-source packages out there that will still leave you enough flexibility to optimize on your specific use case. The risk is on the side of HR — many roads lead to NLP and data science. Data scientists are often self-taught and have a rather interdisciplinary background. While this often leads to highly diverse and creative teams, they will not always have the killer combination of innate intuition and academic rigor that the black belts earned during years of focused training. As deadlines and budgets tighten, your team might get loose on methods of training and evaluation and accumulate dangerous technical debt.

On the third level is a “black box” where you buy NLP and integrate it into you own tech stack. Your developers will mostly consume paid APIs that provide the standard algorithms out-of-the-box, such as Rosette, Semantria and Bitext (cf. this post for an extensive review of existing APIs). Ideally, your data scientists will be working along with business analysts or subject matter experts to squeeze out maximal value from the analysed data. For example, if you are doing legal contract analytics, your business analysts will design a model of the contract structure along with the different entities and relations that ought to appear in each section or paragraph of the document.

The rule of thumb at the black-box level is: buy NLP from black belts! With this understood, one of the obvious advantages of outsourcing NLP is that you don't run into the danger of diluting your technological focus. The downside is a lack of flexibility – mostly perceived after a certain time, when more and more business requirements have trickled through to your data folks. It is also advisable to invest into manual quality assurance to make sure the API outputs good quality for your specific data and use case.

So, where do you start? Here are some practical key takeaways:

- Talk to your tech folks about your business objectives, let them research and prototype and start out on level 2 or 3.

- Think in terms of the modern Pareto principle variation: 10% of effort account for 90% of the results. When you feel you are at 90%, take a break and observe the ROI of your efforts.

- Make sure your team doesn't transition to the low-level details of level 1 too early — this might lead to significant slips in time and budget since a huge amount of knowledge and training is required to succeed at this level.

- Don't fear — you can always consider a transition from 2 to 3, or vice versa, further down the path. Think about it - you will need to refactor your application anyway.

- If you manage to build up a compelling business case with NLP — welcome to the club, you can use it to attract first-class specialists and add to your uniqueness by transitioning to the black-belt camp!